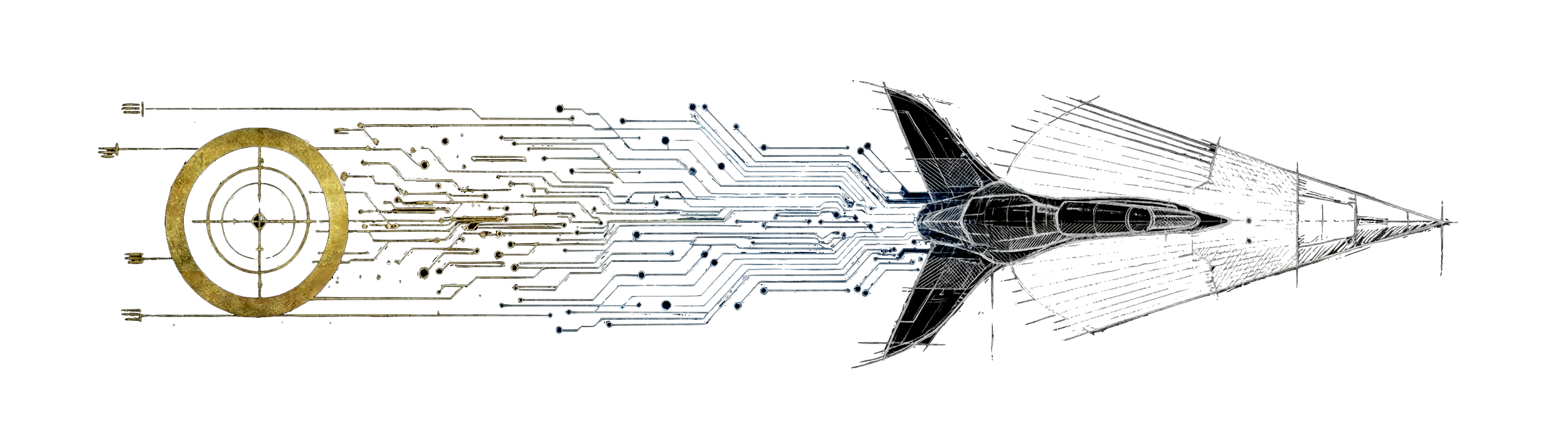

The coming age of supersonic, human-driven discovery

We are not in the old world anymore.

The coming age of discovery can remain human-centric—but only if we make it so. Autonomy is not impossible. The question is whether humans remain inside the loop that connects discovery, execution, and economic value.

Machine intelligence has pushed us into a new regime. Iteration is cheap. Expertise is accessible. The distance from idea to implementation has collapsed. We are no longer standing on the shoulders of giants; we are in conversation with them. Combined human knowledge is no longer a static archive but an active partner: tangible, alive.

This changes what gets discovered, how value compounds, and who remains accountable.

The transonic phase

We are in a transitional phase where speed outruns established methods of control and understanding. We call this phase transonic.

In aerodynamics, the transonic region is unstable by nature. Shockwaves form. Lift can drop abruptly. Control surfaces lose their authority. You cannot fly through it using subsonic methods: the airframe and control systems must be redesigned for the new regime.

The same is true for discovery:

Old habits fail.

Institutions built for slower cycles break.

Optimisation outruns comprehension.

Moving faster without adapting does not lead to progress. It leads to wreckage without understanding or insight.

What discovery is (and isn't)

Discovery is not output.

Discovery is understanding that survives contact with reality—understanding that can be explained, tested, reproduced, and acted on. Outputs are cheap. Accountability is not.

AI accelerates exploration and execution, but it does not relieve humans of responsibility. Humans remain accountable for:

Choosing which problems matter.

Specifying objectives and constraints.

Validating against reality.

Deciding how value is created and distributed.

When we delegate understanding, we do not just move faster—we make ourselves optional.

The problems we work on

We focus on hard problems. Riemann-hard problems.

By this we mean problems that:

Resist shallow optimisation.

Expose the limits of existing tools and intuitions.

Require new representations or conceptual frames.

Unlock nonlinear value when understood.

These are problems where progress is not a matter of scale or brute force, but of insight. Problems where speed alone is dangerous, and understanding is the bottleneck.

We are interested in such problems precisely because they force humans to remain in the loop.

How we work in the transonic phase

We move fast, but we treat speed itself as a governance problem.

Models generate hypotheses, not answers.

Reality contact is mandatory: experiments, users, data, deployment.

Understanding gates exist at irreversible points.

Human judgment remains where failure is costly or objectives are underspecified.

We build enough of the system to understand it, not just operate it.

Human-in-the-loop is not a preference. It is a risk control.

Why this matters

As discovery and execution accelerate, incentives shift. Closed loops form. Systems begin to propose, build, deploy, and reinvest faster than humans can intervene.

The danger is not dramatic failure. The danger is gradual drift:

Loss of understanding

Loss of agency

Loss of accountability

That is how control leaves the loop without anyone explicitly choosing it. Once we are at this speed, returning to unaugmented reasoning is not merely slow. It is impossible. The old airframe cannot do what the new regime demands.

Beyond transonic

Beyond the transonic phase lies a stable regime where AI accelerates discovery and execution, humans remain accountable, and compounding advantage does not silently become compounding control.

That future is not automatic. It is a design choice.

We get there by flying through the instability, not around it.

This is our work.

Attila & Matthew, founders of CriticalZero